ANOVA in_Python

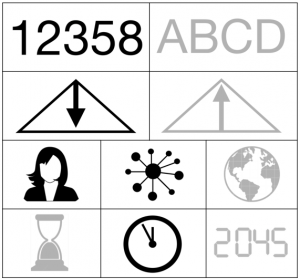

Quantitative - Qualitative

Deductive - Inductive

Individual - System - Global

Past - Present - Future

Note: This entry introduces the implementation of an Analysis of Variance (ANOVA) in Python. Helpful prerequisites are a general understanding of the ANOVA, Boxplots and Histograms and basic Python knowledge. For more on Experiments, in which ANOVAs are typically conducted, please refer to the entries on Experiments, Experiments and Hypothesis Testing as well as Field experiments.

In short: The Analysis of Variance is a statistical method that allows to test differences of the mean values of groups within a sample.

Contents

Introduction[edit]

The Analysis of Variance (ANOVA) by Fisher tests differences of the means of a continuous variable between multiple groups. It extends the t-test to compare more than two groups at the same time. The ANOVA was initially designed for analysing differences in mean values between different treatments within experiments. It is especially useful to analyse field experiments that tame variance through replicates. For example, Fisher used the ANOVA to compare the effect of different fertilizers on plant growth (e.g. Fisher and Wishart 1930). Thus, the ANOVA started out as a deductive approach to test hypotheses with rigid experimental designs. Therefore, the ANOVA builds on strict preconditions. However, nowadays the ANOVA is increasingly used for inductive data, too.

How it works[edit]

The ANOVA tests differences of the means of a continuous variable between multiple groups (factor levels) of one or more categorical variables (factors). For example, we can compare mean tooth length of guinea pigs depending on the vitamin C dose level. More formally, the ANOVA tests the following hypothesis:

- Null hypothesis H0: Nothing is happening, that means the means are not significantly different from each other and differences could be just by chance.

- Alternative hypothesis H1: At least one of the means is significantly different from the others (Crawley 2013).

How does the ANOVA compare means by comparing variances? The ANOVA compares the variation between replicates receiving the same treatment with the variation between different treatments. If the variation between replicates within treatments is small compared to the difference between treatments, it concludes that the treatment means are significantly different. For a more in-depth explanation including plots and underlying formulas, refer to 'The R Book' (Crawley 2013, 498-502).

ANOVAs can be differentiated according to the number of factors:

- One-way ANOVA: An ANOVA with only one factor (e.g. vitamin C dose level).

- Two-way ANOVA: An ANOVA with two factors (e.g. vitamin C delivery method).

Two-way ANOVAs require to look at interactions as well. Statistical interactions mean that the response to one factor depends on the level of another factor (Crawley 2013). For example, the right delivery method might increase the tooth growth only if it is given in a medium dose.

This is the general procedure to doing an ANOVA:

- Inspect and clean the data

- Plot the data with boxplots

- Formulate hypotheses

- Check assumptions

- Run an ANOVA

- Reduce the model

- Inspect the residuals

- Interpret the results

- Run a post-hoc test

In the following, we will go through this process step by step using the ToothGrowth dataset as an example.

Example: effect of vitamin C on tooth growth in guinea pigs[edit]

The ToothGrowth dataset was retrieved from this Rdataset GitHub Repository (‘ToothGrowth’ 2023; original source Bliss 1952). It contains data about the effect of vitamin C on tooth growth in 60 guinea pigs. The dataset contains the following variables (‘The Effect of Vitamin C on Tooth Growth in Guinea Pigs’, n.d.):

- 'len': Length of odontoblasts which are cells responsible for tooth growth (dependent variable).

- 'dose': Each animal received one of three dose levels of vitamin C (0.5, 1, and 2 mg/day).

- 'supp': Each animal received the vitamin C by one of two delivery methods, orange juice (OJ) or ascorbic acid (a form of vitamin C and coded as VC)

Inspect and clean the data[edit]

First, we import any libraries required for analysis and load the ToothGrowth data.

# Import libraries for handling dataframes import pandas as pd # Import libraries for plotting import seaborn as sns import matplotlib.pyplot as plt # Import libraries for running ANOVA and post-hoc test from statsmodels.formula.api import ols from statsmodels.stats.anova import anova_lm from statsmodels.stats.multicomp import pairwise_tukeyhsd

# Load ToothGrowth data url = 'https://raw.githubusercontent.com/vincentarelbundock/Rdatasets/refs/heads/master/csv/datasets/ToothGrowth.csv' df = pd.read_csv(url).iloc[:, 1:] # Display the first few rows df.head()

len supp dose 0 4.2 VC 0.5 1 11.5 VC 0.5 2 7.3 VC 0.5 3 5.8 VC 0.5 4 6.4 VC 0.5

Next, we check if there are missing values and if the data types of the variables are correct.

# Check missing values and data types df.info()

<class 'pandas.core.frame.DataFrame'> RangeIndex: 60 entries, 0 to 59 Data columns (total 3 columns): # Column Non-Null Count Dtype --- ------ -------------- ----- 0 len 60 non-null float64 1 supp 60 non-null object 2 dose 60 non-null float64 dtypes: float64(2), object(1) memory usage: 1.5+ KB

There are no missing values. The data types seem correct, except for one: 'dose' is a factor with 3 levels, but Python currently interprets it as a float, that means a continuous variable. For a correct interpretation as a factor in the following analysis, we need to convert the data type to 'object'.

# Convert dose to a factor

df['dose'] = df['dose'].astype('object')

Plot the data with boxplots[edit]

We can visually investigate differences in tooth length based on the different factors by looking at boxplots.

# Set the figure size to make the plot smaller

plt.figure(figsize=(5, 3))

# Plot tooth length by dose and supp

sns.boxplot(x='dose', y='len', hue='supp', data=df, palette='colorblind')

plt.title('Tooth length by dose level and delivery method')

plt.xlabel('Dose level')

plt.ylabel('Tooth length')

# Customize the legend

handles, labels = plt.gca().get_legend_handles_labels()

plt.legend(handles=handles, labels=['Orange juice', 'Ascorbic acid'], title='Delivery method')

plt.show()

The line in the middle of the box shows the median, that means the 'middle' data point, of this group. 50% of data points lie within the box. As a rule of thumb, if the edge of one box is lower than the median, the two groups are probably significantly different. Following this, most of the different treatment combinations seem to be different from each other, except the orange juice and ascorbic acid in the highest dose.

Formulate hypotheses[edit]

The hypotheses in our example are the following:

- Null hypothesis: Mean tooth length is not significantly different between different (combinations of) dose levels and delivery methods of vitamin C.

- Alternative hypothesis: At least one of the means is significantly different from the others.

Check assumptions[edit]

ANOVA builds on many assumptions:

Independent samples

Each observation comes from a different, randomly sampled participant. For example, we have 60 randomly sampled guinea pigs in the different treatment groups. A violation of the assumption would be if we used the same guinea pig multiple times (without further specifications in our model). This assumption cannot be tested by tests or plots, but needs to hold on conceptual grounds. While slight violations of the other assumptions might be less problematic, a violation of independence means your results will likely be invalid (Ben-Shachar and Singmann 2024).

Equal sample sizes of groups

The sample sizes of the different factor levels should be similar (= balanced design). If this assumption does not hold, there is the option to do a Type 3 ANOVA which can better deal with unequal group sizes.

To test the assumption of equal sample sizes, we can make a contingency table. This displays the number of observations for each of the different combinations of dose level and delivery method.

# Show the number of observations for all treatment combinations pd.crosstab(df['supp'], df['dose'])

dose 0.5 1.0 2.0 supp OJ 10 10 10 VC 10 10 10

We have 10 observations in each group and the design is balanced.

Equal variances of groups

The variances of the different factor levels should be similar.

To test the assumption of equal variances of groups, we can look at the boxplots above. The length of the boxplots is a bit different between the different groups indicating slightly different variances which is not ideal. However, I would argue it is still in a range where we can continue the analysis.

Normal distribution of the dependent variable

The dependent variable should be normally distributed. Ideally, it is normally distributed within each factor level. If this assumption does not hold, you can transform your data (e.g. log-transform right-skewed data) to make it more normally distributed.

To check the assumption of normality, we can plot the tooth length in a histogram. Check out different binwidths because they can give a different impression.

# Set up 2 subplots

fig, axes = plt.subplots(1, 2, figsize=(5, 2.5), sharey=True)

# Plot thooth length with bin width of 4

sns.histplot(data=df, x='len', binwidth=4, ax=axes[0])

axes[0].set_title('Histogram (binwidth 4)')

axes[0].set_xlabel('Tooth length')

axes[0].set_ylabel('Count')

# Plot tooth length with bin width of 5

sns.histplot(data=df, x='len', binwidth=5, ax=axes[1])

axes[1].set_title('Histogram (binwidth 5)')

axes[1].set_xlabel('Tooth length')

# Adjust layout

plt.tight_layout()

plt.show()

This is not ideal, but at least approximately normal. To look at the distribution within each factor level, look at the boxplots above. The more symmetric a boxplot looks, the more normal the distribution. I would say they look fine.

Normal distribution of residuals

The residuals, that means the errors the model makes while predicting, should be normally distributed. In other words, there should be more small errors than large errors. This can only be checked after running the model, so we will come back to that.

Run an ANOVA[edit]

Since we do not severely violate the assumptions, we can now run an ANOVA. In Python, this can be done with the 'statsmodels' package (see example at ‘ANOVA’ 2024 and documentation at ‘Statsmodels.Stats.Anova.Anova_lm’ 2024).

# Run an ANOVA with interactions

formula = 'len ~ C(supp) * C(dose)'

model = ols(formula, df).fit()

aov_table = anova_lm(model, typ=2).round(3).fillna('')

aov_table

sum_sq df F PR(>F) C(supp) 205.350 1.0 15.572 0.0 C(dose) 2426.434 2.0 92.0 0.0 C(supp):C(dose) 108.319 2.0 4.107 0.022 Residual 712.106 54.0

Here, we first specified the formula which tells the model what we want to test. 'len ~ C(supp) * C(dose)' means that we want to explain tooth length by dose level and delivery method. The star * indicates that we want to test for an interaction between dose level and delivery methods, too. The C() tells Python that it should treat these variables as factors. Since we already converted them to the correct data types earlier, this is not really necessary, but it comes in handy if you did not convert your numerical values before. With the formula we can fit a linear model to our data. The linear model is then passed to the anova_lm() function.

Reduce the model[edit]

The next step is to reduce the model because we want to find the most parsimonious or minimal adequate model. Following Occam's razor, the model should be as simple as possible and as complex as necessary. In practice, we only want to include a factor or interaction in the model if it significantly improves the fit of the model (Crawley 2013). One common way of model reduction is reduction by p-values, so we iteratively exclude factors with insignificant p-values (> 0.05). In our case, we start by looking at the interaction term 'C(supp):C(dose)'. Since it is significant, we do not need to further reduce the model, but have already found the minimal adequate model.

Inspect the residuals[edit]

Before looking at the results, we should check if the residuals are normally distributed by looking at a histogram.

# Extract the residuals from the model

res = model.resid

# Set up 2 subplots

fig, axes = plt.subplots(1, 2, figsize=(5, 2.5), sharey=True)

# Plot the residuals with bin width of 3

sns.histplot(res, binwidth=3, ax=axes[0])

axes[0].set_title('Histogram (binwidth 3)')

axes[0].set_xlabel('Residuals')

axes[0].set_ylabel('Count')

# Plot the residuals with bin width of 4

sns.histplot(res, binwidth=4, ax=axes[1])

axes[1].set_title('Histogram (binwidth 4)')

axes[1].set_xlabel('Residuals')

# Adjust layout

plt.tight_layout()

plt.show()

The residuals are a bit right-skewed, but I would say this is still okay.

Interpret the results[edit]

Now, the most important question, what does it mean?

# Show the ANOVA results again aov_table

sum_sq df F PR(>F) C(supp) 205.350 1.0 15.572 0.0 C(dose) 2426.434 2.0 92.0 0.0 C(supp):C(dose) 108.319 2.0 4.107 0.022 Residual 712.106 54.0

The significant p-values for both supp and dose tell us that the tooth length significantly differs between different dose levels and delivery methods. Furthermore, the significant interaction term means that the response of tooth length to the delivery method also depends on the dose level (or vice versa). This can also be seen in the boxplots above: on a medium dose level, there is a difference in tooth length between orange juice and ascorbic acid, but on the highest dose level the two delivery methods lead to the same tooth length. You can also look at the sum of squares which tell you how much variation is explained by the factors. We can see that the dose level explains a majority of the variation in our data.

# Extract the sum of squares values for the model and residuals

SS_model = aov_table['sum_sq'].iloc[:-1].sum()

SS_residual = aov_table['sum_sq'].iloc[-1]

# Total sum of squares

SS_total = SS_model + SS_residual

# Explained variance

explained_variance = SS_model / SS_total

print(f'Explained Variance: {explained_variance:.3f}')

Explained Variance: 0.794

The explained variance (sum of squares of the model / total sum of squares incl. residuals) shows that our model (delivery method, dose level and their interaction) explains about 80 % of the variation in tooth length.

Run a post-hoc test[edit]

To get a more detailed view of the results, we can run a post-hoc test which compares every factor level against every other factor level.

# Combine supp and dose into a single grouping variable df['group'] = df['supp'] + '_' + df['dose'].astype(str) # Run Tukey HSD test tukey = pairwise_tukeyhsd(endog=df['len'], groups=df['group'], alpha=0.05) print(tukey)

Multiple Comparison of Means - Tukey HSD, FWER=0.05 ====================================================== group1 group2 meandiff p-adj lower upper reject ------------------------------------------------------ OJ_0.5 OJ_1.0 9.47 0.0 4.6719 14.2681 True OJ_0.5 OJ_2.0 12.83 0.0 8.0319 17.6281 True OJ_0.5 VC_0.5 -5.25 0.0243 -10.0481 -0.4519 True OJ_0.5 VC_1.0 3.54 0.264 -1.2581 8.3381 False OJ_0.5 VC_2.0 12.91 0.0 8.1119 17.7081 True OJ_1.0 OJ_2.0 3.36 0.3187 -1.4381 8.1581 False OJ_1.0 VC_0.5 -14.72 0.0 -19.5181 -9.9219 True OJ_1.0 VC_1.0 -5.93 0.0074 -10.7281 -1.1319 True OJ_1.0 VC_2.0 3.44 0.2936 -1.3581 8.2381 False OJ_2.0 VC_0.5 -18.08 0.0 -22.8781 -13.2819 True OJ_2.0 VC_1.0 -9.29 0.0 -14.0881 -4.4919 True OJ_2.0 VC_2.0 0.08 1.0 -4.7181 4.8781 False VC_0.5 VC_1.0 8.79 0.0 3.9919 13.5881 True VC_0.5 VC_2.0 18.16 0.0 13.3619 22.9581 True VC_1.0 VC_2.0 9.37 0.0 4.5719 14.1681 True ------------------------------------------------------

The Tukey HSD test shows the p-values and mean differences between all the different factor levels. You can use the boxplots above to check in a more visual way what these numbers mean. Looking at the post-hoc test and boxplots, orange juice and a high dose generally lead to longer teeth. However, some groups do not differ, for example, there is no difference between orange juice and ascorbic acid when given in the highest dose.

So, what do you do if the teeth of your guinea pigs do not grow enough? You give them 1 mg vitamin C per day in the form of orange juice or 2 mg vitamin per day in the form of orange juice or ascorbic acid. As you can imagine, the reason for this experiment was not a concern about tooth growth in guinea pigs, but a much more severe problem. During the second world war, the Canadian government was concerned about how to provide natural sources of vitamin C to its soldiers. The experiment used tooth growth in guinea pigs as an estimation of the intake of vitamin C from different sources to determine the best delivery method (Crampton 1947). Thanks to our ANOVA we can say that they hopefully went for orange juice if they were only able to deliver a medium dose, whereas the delivery method does not matter for the highest dose level.

Normativity, strengths and challenges[edit]

Since you can read up on the normativity, strengths and challenges in the general ANOVA entry, here are some related reflection questions that you might want to think about when doing an ANOVA:

- Considering the level of complexity of your data and the ANOVA assumptions, is the ANOVA the most parsimonious approach, or do you need a more complex model (e.g. a generalized linear model to deal with non-normally distributed data)?

- Are the assumptions of the ANOVA met (even if you use inductive data)?

- Which worldview underlies the constructed categories you analyse?

- Is there good reason to assume a causal dependence between your variables?

- Which approach do you want to use for model reduction?

- What are the limitations of your analysis?

- How do your results add to parts of a larger picture of knowledge?

Outlook[edit]

The ANOVA is a staple in deductive designs and can still be the most parsimonious approach for some analyses. It also builds the ground for more complex models like mixed effect models. Therefore, being able to do ANOVAs in Python will also help you to understand these more advanced models. For an outlook on the ANOVA more generally, please refer to the ANOVA entry.

Further reading[edit]

Crawley, Michael J. 2013. The R Book. 2nd ed. Hoboken, N.J: John Wiley & Sons Inc.

References[edit]

‘ANOVA’. 2024. Statsmodels. 3 October 2024. https://www.statsmodels.org/stable/anova.html.

Ben-Shachar, Mattan S., and Henrik Singmann. 2024. ‘Testing the Assumptions of ANOVAs’. The Comprehensive R Archive Network. 1 September 2024. https://cran.r-project.org/web/packages/afex/vignettes/assumptions_of_ANOVAs.html.

Bliss, C. I. 1952. The Statistics of Bioassay. Academic Press.

Crampton, E.W. 1947. ‘The Growth of the Odontoblasts of the Incisor Tooth as a Criterion of the Vitamin C Intake of the Guinea Pig’. The Journal of Nutrition 33 (5): 491–504. https://doi.org/10.1093/jn/33.5.491.

Crawley, Michael J. 2013. The R Book. 2nd ed. Hoboken, N.J: John Wiley & Sons Inc.

Fisher, Ronald Aylmer, and John Wishart. 1930. ‘The Arrangement of Field Experiments and the Statistical Reduction of the Results’. https://doi.org/10.23637/ROTHAMSTED.8V20Y.

‘Statsmodels.Stats.Anova.Anova_lm’. 2024. Statsmodels. 3 October 2024. https://www.statsmodels.org/stable/generated/statsmodels.stats.anova.anova_lm.html#statsmodels.stats.anova.anova_lm.

‘The Effect of Vitamin C on Tooth Growth in Guinea Pigs’. n.d. The Comprehensive R Archive Network. Accessed 16 December 2024. https://search.r-project.org/R/refmans/datasets/html/ToothGrowth.html.

‘ToothGrowth’. 2023. Csv. GitHub: vincentarelbundock/Rdatasets. https://github.com/vincentarelbundock/Rdatasets/blob/f58caff6458204b33c44ef35976e555b316af5c4/csv/datasets/ToothGrowth.csv.

The author of this entry is Wanja Tolksdorf. Last edited: 7.11.2025